(listFiles.size());

-// for (WorkspaceContentDV fileUploaded : listFiles) {

-// InputStream is;

-// try {

-// is = new URL(fileUploaded.getLink()).openStream();

-// // Creating TempFile

-// TempFile storageTempFile = createTempFileOnStorage(is, fileUploaded.getName());

-// files.add(storageTempFile);

-// } catch (IOException e) {

-// LOG.error("Error on creating temp file from URL: " + fileUploaded.getLink(), e);

-// }

-// }

-// return files;

-// }

+

+ /**

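+ * Creates a TempFile on the storage from a remotely uploaded file.

+ *

+ * @param file the remote file, read from its URL; may be null

+ * @return the TempFile, or null if the input is null or an I/O error occurs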

+ */

+ public TempFile toTempFileFromRemote(FileUploadedRemote file) {

+ LOG.debug("toTempFileFromRemote called");

+ if (file == null)

+ return null;

+

+ // Building TempFile

+ TempFile storageTempFile = null;

+ // try-with-resources closes the remote stream once the temp file has been created

+ try (InputStream is = new URL(file.getUrl()).openStream()) {

+ // Creating TempFile

+ storageTempFile = createTempFileOnStorage(is, file.getFileName());

+ } catch (IOException e) {

+ LOG.error("Error on creating temp file from URL: " + file.getUrl(), e);

+ }

+

+ return storageTempFile;

+ }

+

/**

* To JSON.

diff --git a/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/SessionUtil.java b/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/SessionUtil.java

index e7eb43a..a08c3b0 100644

--- a/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/SessionUtil.java

+++ b/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/SessionUtil.java

@@ -12,9 +12,7 @@ import javax.servlet.http.HttpSession;

import org.gcube.application.geoportal.common.model.legacy.Concessione;

import org.gcube.application.geoportal.common.rest.MongoConcessioni;

-import org.gcube.application.geoportalcommon.GeoportalCommon;

import org.gcube.application.geoportalcommon.shared.GNADataEntryConfigProfile;

-import org.gcube.application.geoportalcommon.shared.GNADataViewerConfigProfile;

import org.gcube.application.geoportalcommon.shared.geoportal.project.ProjectDV;

import org.gcube.application.geoportalcommon.shared.geoportal.ucd.RelationshipDefinitionDV;

import org.gcube.common.authorization.library.provider.SecurityTokenProvider;

@@ -43,7 +41,7 @@ public class SessionUtil {

private static final String GNA_DATAENTRY_CONFIG_PROFILE = "GNA_DATAENTRY_CONFIG_PROFILE";

private static final String LATEST_RESULT_SET_SORTED = "LATEST_RESULT_SET_SORTED";

- private static final String GEONA_DATAVIEWER_PROFILE = "GEONA_DATAVIEWER_PROFILE";

+ //private static final String GEONA_DATAVIEWER_PROFILE = "GEONA_DATAVIEWER_PROFILE";

private static final String LIST_OF_CONCESSIONI = "LIST_OF_CONCESSIONI";

private static final String LIST_OF_RELATIONSHIP_DEFINITION = "LIST_OF_RELATIONSHIP_DEFINITION";

@@ -232,28 +230,28 @@ public class SessionUtil {

return listOfConcessioni;

}

- /**

- * Gets the geportal viewer resource profile.

- *

- * @param httpServletRequest the http servlet request

- * @return the geportal viewer resource profile

- * @throws Exception the exception

- */

- public static GNADataViewerConfigProfile getGeportalViewerResourceProfile(HttpServletRequest httpServletRequest)

- throws Exception {

- HttpSession session = httpServletRequest.getSession();

- GNADataViewerConfigProfile geoNaDataViewerProfile = (GNADataViewerConfigProfile) session

- .getAttribute(GEONA_DATAVIEWER_PROFILE);

-

- if (geoNaDataViewerProfile == null) {

- GeoportalCommon gc = new GeoportalCommon();

- geoNaDataViewerProfile = gc.readGNADataViewerConfig(null);

- session.setAttribute(GEONA_DATAVIEWER_PROFILE, geoNaDataViewerProfile);

- }

-

- return geoNaDataViewerProfile;

-

- }

+// /**

+// * Gets the geportal viewer resource profile.

+// *

+// * @param httpServletRequest the http servlet request

+// * @return the geportal viewer resource profile

+// * @throws Exception the exception

+// */

+// public static GNADataViewerConfigProfile getGeportalViewerResourceProfile(HttpServletRequest httpServletRequest)

+// throws Exception {

+// HttpSession session = httpServletRequest.getSession();

+// GNADataViewerConfigProfile geoNaDataViewerProfile = (GNADataViewerConfigProfile) session

+// .getAttribute(GEONA_DATAVIEWER_PROFILE);

+//

+// if (geoNaDataViewerProfile == null) {

+// GeoportalCommon gc = new GeoportalCommon();

+// geoNaDataViewerProfile = gc.readGNADataViewerConfig(null);

+// session.setAttribute(GEONA_DATAVIEWER_PROFILE, geoNaDataViewerProfile);

+// }

+//

+// return geoNaDataViewerProfile;

+//

+// }

/**

* Gets the latest result set sorted.

diff --git a/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/json/JsonMerge.java b/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/json/JsonMerge.java

new file mode 100644

index 0000000..c4289a4

--- /dev/null

+++ b/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/json/JsonMerge.java

@@ -0,0 +1,328 @@

+package org.gcube.portlets.user.geoportaldataentry.server.json;

+

+import java.io.IOException;

+import java.util.HashSet;

+import java.util.Iterator;

+import java.util.Map;

+

+import com.fasterxml.jackson.databind.JsonNode;

+import com.fasterxml.jackson.databind.ObjectMapper;

+import com.fasterxml.jackson.databind.node.ArrayNode;

+import com.fasterxml.jackson.databind.node.ObjectNode;

+

+/**

+ * This class provides methods to merge two json of any nested level into a

+ * single json.

+ *

+ * copied from: https://github.com/hemantsonu20/json-merge

+ *

+ * @maintainer updated by Francesco Mangiacrapa at ISTI-CNR

+ * francesco.mangiacrapa@isti.cnr.it

+ *

+ * Apr 21, 2023

+ */

+public class JsonMerge {

+

+ private static final ObjectMapper OBJECT_MAPPER = new ObjectMapper();

+

+ /**

+ * The Enum MERGE_OPTION.

+ *

+ * @author Francesco Mangiacrapa at ISTI-CNR francesco.mangiacrapa@isti.cnr.it

+ *

+ * Apr 21, 2023

+ */

+ public static enum MERGE_OPTION {

+ MERGE, REPLACE

+ }

+

+ /**

+ * Method to merge two json objects into single json object.

+ *

+ *

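+ * It merges two JSON documents of any nesting depth into a single one. When a key

+ * exists on both sides, objects are merged recursively, arrays are combined

+ * according to the option, and otherwise the source value wins.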

+ *

+ *

+ *

+ * Examples

+ * Example 1

+ *

+ * Source Json

+ *

+ *

+ *

+ * {@code

+ * {

+ * "name": "json-merge-src"

+ * }

+ * }

+ *

+ *

+ * Target Json

+ *

+ *

+ *

+ * {@code

+ * {

+ * "name": "json-merge-target"

+ * }

+ * }

+ *

+ *

+ * Output

+ *

+ *

+ *

+ * {@code

+ * {

+ * "name": "json-merge-src"

+ * }

+ * }

+ *

+ *

+ * Example 2

+ *

+ * Source Json

+ *

+ *

+ *

+ * {@code

+ * {

+ * "level1": {

+ * "key1": "SrcValue1"

+ * }

+ * }

+ * }

+ *

+ *

+ * Target Json

+ *

+ *

+ *

+ * {@code

+ * {

+ * "level1": {

+ * "key1": "targetValue1",

+ * "level2": {

+ * "key2": "value2"

+ * }

+ * }

+ * }

+ * }

+ *

+ *

+ * Output

+ *

+ *

+ *

+ * {@code

+ * {

+ * "level1": {

+ * "key1": "SrcValue1",

+ * "level2": {

+ * "key2": "value2"

+ * }

+ * }

+ * }

+ * }

+ *

+ *

+ * @param srcJsonStr source json string

+ * @param targetJsonStr target json string

+ * @param option the option

+ * @return merged json as a string

+ */

+ public static String merge(String srcJsonStr, String targetJsonStr, MERGE_OPTION option) {

+

+ try {

+ if (option == null)

+ option = MERGE_OPTION.MERGE;

+

+ JsonNode srcNode = OBJECT_MAPPER.readTree(srcJsonStr);

+ JsonNode targetNode = OBJECT_MAPPER.readTree(targetJsonStr);

+ JsonNode result = merge(srcNode, targetNode, option);

+ return OBJECT_MAPPER.writeValueAsString(result);

+ } catch (IOException e) {

+ throw new JsonMergeException("Unable to merge json", e);

+ }

+ }

+

+ /**

+ * Merge.

+ *

+ * @param srcNode the src node

+ * @param targetNode the target node

+ * @param option the option

+ * @return the json node

+ */

+ public static JsonNode merge(JsonNode srcNode, JsonNode targetNode, MERGE_OPTION option) {

+

+ if (option == null)

+ option = MERGE_OPTION.MERGE;

+

+ // if both nodes are object node, merged object node is returned

+ if (srcNode.isObject() && targetNode.isObject()) {

+ return merge((ObjectNode) srcNode, (ObjectNode) targetNode, option);

+ }

+

+ // if both nodes are array node, merged array node is returned

+ if (srcNode.isArray() && targetNode.isArray()) {

+ return mergeArray((ArrayNode) srcNode, (ArrayNode) targetNode, option);

+ }

+

+ // special case when src node is null

+ if (srcNode.isNull()) {

+ return targetNode;

+ }

+

+ return srcNode;

+ }

+

+ /**

+ * Merge.

+ *

+ * @param srcNode the src node

+ * @param targetNode the target node

+ * @param option the option

+ * @return the object node

+ */

+ public static ObjectNode merge(ObjectNode srcNode, ObjectNode targetNode, MERGE_OPTION option) {

+

+ ObjectNode result = OBJECT_MAPPER.createObjectNode();

+

+ Iterator<Map.Entry<String, JsonNode>> srcItr = srcNode.fields();

+ while (srcItr.hasNext()) {

+

+ Map.Entry<String, JsonNode> entry = srcItr.next();

+

+ // check key in src json exists in target json or not at same level

+ if (targetNode.has(entry.getKey())) {

+ result.set(entry.getKey(), merge(entry.getValue(), targetNode.get(entry.getKey()), option));

+ } else {

+ // if key in src json doesn't exist in target json, just copy the same in result

+ result.set(entry.getKey(), entry.getValue());

+ }

+ }

+

+ // copy fields from target json into result which were missing in src json

+ Iterator<Map.Entry<String, JsonNode>> targetItr = targetNode.fields();

+ while (targetItr.hasNext()) {

+ Map.Entry<String, JsonNode> entry = targetItr.next();

+ if (!result.has(entry.getKey())) {

+ result.set(entry.getKey(), entry.getValue());

+ }

+ }

+ return result;

+ }

+

+ /**

+ * Merge.

+ *

+ * @param srcNode the src node

+ * @param targetNode the target node

+ * @param option the option

+ * @return the array node

+ */

+ public static ArrayNode mergeArray(ArrayNode srcNode, ArrayNode targetNode, MERGE_OPTION option) {

+ ArrayNode result = OBJECT_MAPPER.createArrayNode();

+

+ switch (option) {

+ case REPLACE:

+ //Replacing source json value as result

+ return result.addAll(srcNode);

+ //return result.addAll(srcNode).addAll(targetNode);

+ default:

+ return mergeSet(srcNode, targetNode);

+

+ }

+ }

+

+ /**

+ * Merges two arrays into one, dropping duplicate elements. Added by Francesco Mangiacrapa.

+ *

+ * @param srcNode the src node

+ * @param targetNode the target node

+ * @return the array node

+ */

+ public static ArrayNode mergeSet(ArrayNode srcNode, ArrayNode targetNode) {

+ ArrayNode result = OBJECT_MAPPER.createArrayNode();

+

+ HashSet<JsonNode> set = new HashSet<>();

+

+ set = toHashSet(set, srcNode);

+ set = toHashSet(set, targetNode);

+

+ Iterator<JsonNode> itr = set.iterator();

+ while (itr != null && itr.hasNext()) {

+ JsonNode arrayValue = itr.next();

+ result.add(arrayValue);

+ }

+

+ return result;

+ }

+

+ /**

+ * To hash set.

+ *

+ * @param set the set

+ * @param srcNode the src node

+ * @return the hash set

+ */

+ public static HashSet<JsonNode> toHashSet(HashSet<JsonNode> set, ArrayNode srcNode) {

+ if (srcNode != null) {

+ Iterator<JsonNode> itr = srcNode.elements();

+ while (itr != null && itr.hasNext()) {

+ JsonNode arrayValue = itr.next();

+ set.add(arrayValue);

+ }

+ }

+ return set;

+ }

+}

\ No newline at end of file
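
Review note: the merge rules in `JsonMerge` above can be sketched against plain `java.util` maps and lists, which keeps the example runnable without Jackson. This is only an illustration under stated assumptions: `MapMergeSketch` and its `Option` enum are hypothetical names that are not part of this change, and the array union uses a `LinkedHashSet` as a swapped-in choice (the diff uses a `HashSet`, whose iteration order is not guaranteed).

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;

/** Hypothetical stand-in for JsonMerge that works on plain Maps and Lists. */
final class MapMergeSketch {

    /** Mirrors MERGE_OPTION from the diff; the name is an assumption. */
    enum Option { MERGE, REPLACE }

    /**
     * Objects merge recursively, arrays follow the option,
     * and for any other pair the source value wins unless it is null.
     */
    @SuppressWarnings("unchecked")
    static Object merge(Object src, Object target, Option option) {
        if (src instanceof Map && target instanceof Map) {
            Map<String, Object> srcMap = (Map<String, Object>) src;
            Map<String, Object> targetMap = (Map<String, Object>) target;
            Map<String, Object> result = new LinkedHashMap<>();
            for (Map.Entry<String, Object> e : srcMap.entrySet()) {
                // key on both sides: recurse; key only in source: copy as-is
                Object value = targetMap.containsKey(e.getKey())
                        ? merge(e.getValue(), targetMap.get(e.getKey()), option)
                        : e.getValue();
                result.put(e.getKey(), value);
            }
            // copy the keys that exist only in the target
            for (Map.Entry<String, Object> e : targetMap.entrySet()) {
                if (!result.containsKey(e.getKey())) {
                    result.put(e.getKey(), e.getValue());
                }
            }
            return result;
        }
        if (src instanceof List && target instanceof List) {
            if (option == Option.REPLACE) {
                // REPLACE: the source array is taken as the result
                return new ArrayList<>((List<Object>) src);
            }
            // MERGE (also used when option is null): union without duplicates
            LinkedHashSet<Object> union = new LinkedHashSet<>((List<Object>) src);
            union.addAll((List<Object>) target);
            return new ArrayList<>(union);
        }
        // a null source falls back to the target, like the isNull() special case
        return src == null ? target : src;
    }
}
```

With the nested input from Example 2 of the Javadoc, `merge(src, target, Option.MERGE)` keeps `key1` from the source and `level2` from the target.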

diff --git a/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/json/JsonMergeException.java b/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/json/JsonMergeException.java

new file mode 100644

index 0000000..d3307e4

--- /dev/null

+++ b/src/main/java/org/gcube/portlets/user/geoportaldataentry/server/json/JsonMergeException.java

@@ -0,0 +1,20 @@

+package org.gcube.portlets.user.geoportaldataentry.server.json;

+

+/**

+ * Exception thrown when an error occurs while merging two JSON documents.

+ *

+ */

+public class JsonMergeException extends RuntimeException {

+

+ public JsonMergeException() {

+ super();

+ }

+

+ public JsonMergeException(String msg) {

+ super(msg);

+ }

+

+ public JsonMergeException(String msg, Throwable th) {

+ super(msg, th);

+ }

+}

diff --git a/src/main/resources/org/gcube/portlets/user/geoportaldataentry/GeoPortalDataEntryApp.gwt.xml b/src/main/resources/org/gcube/portlets/user/geoportaldataentry/GeoPortalDataEntryApp.gwt.xml

index 66f97a0..2611f74 100644

--- a/src/main/resources/org/gcube/portlets/user/geoportaldataentry/GeoPortalDataEntryApp.gwt.xml

+++ b/src/main/resources/org/gcube/portlets/user/geoportaldataentry/GeoPortalDataEntryApp.gwt.xml

@@ -12,28 +12,31 @@

+

+

+

+

+

+

+

-

-

-

-

-

-

-

+

+

-

-

+

-

+

+

-

-

-

-

-

-

+

+

+

diff --git a/src/test/java/org/gcube/application/Service_Tests.java b/src/test/java/org/gcube/application/Service_Tests.java

new file mode 100644

index 0000000..1817a2a

--- /dev/null

+++ b/src/test/java/org/gcube/application/Service_Tests.java

@@ -0,0 +1,231 @@

+package org.gcube.application;

+

+import java.io.File;

+import java.io.FileInputStream;

+import java.io.FileOutputStream;

+import java.io.IOException;

+import java.io.InputStream;

+import java.net.URL;

+import java.nio.file.Files;

+import java.nio.file.Path;

+import java.nio.file.Paths;

+import java.nio.file.StandardCopyOption;

+import java.util.List;

+import java.util.Properties;

+

+import javax.ws.rs.WebApplicationException;

+import javax.ws.rs.core.Response;

+

+import org.gcube.application.geoportal.common.model.JSONPathWrapper;

+import org.gcube.application.geoportal.common.model.document.Project;

+import org.gcube.application.geoportal.common.model.document.access.Access;

+import org.gcube.application.geoportal.common.model.document.access.AccessPolicy;

+import org.gcube.application.geoportalcommon.geoportal.GeoportalClientCaller;

+import org.gcube.application.geoportalcommon.geoportal.ProjectsCaller;

+import org.gcube.application.geoportalcommon.geoportal.UseCaseDescriptorCaller;

+import org.gcube.common.authorization.library.provider.SecurityTokenProvider;

+import org.gcube.common.scope.api.ScopeProvider;

+import org.gcube.portlets.user.geoportaldataentry.server.MongoServiceUtil;

+import org.gcube.portlets.widgets.mpformbuilder.shared.upload.FileUploadedRemote;

+import org.junit.Before;

+import org.junit.Test;

+import org.slf4j.Logger;

+import org.slf4j.LoggerFactory;

+

+import com.google.gwt.user.client.Random;

+

+public class Service_Tests {

+

+ private static final String GCUBE_CONFIG_PROPERTIES_FILENAME = "gcube_config.properties";

+ // APP Working Directory + /src/test/resources must be the location of

+ // gcube_config.properties

+ private static String gcube_config_path = String.format("%s/%s",

+ System.getProperty("user.dir") + "/src/test/resources", GCUBE_CONFIG_PROPERTIES_FILENAME);

+ private static String CONTEXT;

+ private static String TOKEN;

+

+ private UseCaseDescriptorCaller clientUCD = null;

+ private ProjectsCaller clientPrj = null;

+

+ private static String PROFILE_ID = "profiledConcessioni";

+ private static String PROJECT_ID = "644a66e944aad51c80409a3b";

+

+ private static String MY_LOGIN = "francesco.mangiacrapa";

+

+ public static final String JSON_$_POINTER = "$";

+ private static final Logger LOG = LoggerFactory.getLogger(Service_Tests.class);

+

+ /**

+ * Read context settings.

+ */

+ public static void readContextSettings() {

+

+ try (InputStream input = new FileInputStream(gcube_config_path)) {

+

+ Properties prop = new Properties();

+

+ // load a properties file

+ prop.load(input);

+

+ CONTEXT = prop.getProperty("CONTEXT");

+ TOKEN = prop.getProperty("TOKEN");

+ // get the property value and print it out

+ System.out.println("CONTEXT: " + CONTEXT);

+ System.out.println("TOKEN: " + TOKEN);

+

+ } catch (IOException ex) {

+ ex.printStackTrace();

+ }

+ }

+

+ //@Before

+ public void init() {

+ readContextSettings();

+ ScopeProvider.instance.set(CONTEXT);

+ SecurityTokenProvider.instance.set(TOKEN);

+ clientPrj = GeoportalClientCaller.projects();

+ clientUCD = GeoportalClientCaller.useCaseDescriptors();

+ }

+

+ //@Test

+ public void deleteFileSet_ServiceTest() throws Exception {

+ ScopeProvider.instance.set(CONTEXT);

+ SecurityTokenProvider.instance.set(TOKEN);

+

+ boolean ignore_errors = false;

+ String path = "$.abstractRelazione.filesetIta";

+

+ Project doc = clientPrj.getProjectByID(PROFILE_ID, PROJECT_ID);

+

+// JSONPathWrapper wrapper = new JSONPathWrapper(doc.getTheDocument().toJson());

+// List matchingPaths = wrapper.getMatchingPaths(path);

+//

+// LOG.info("matchingPaths is: " + matchingPaths);

+//

+// String error = null;

+// if (matchingPaths.isEmpty()) {

+// error = "No Registered FileSet found at " + path;

+// if (!ignore_errors) {

+// throw new WebApplicationException(error, Response.Status.BAD_REQUEST);

+// }

+// }

+// if (matchingPaths.size() > 1 && !ignore_errors) {

+// error = "Multiple Fileset (" + matchingPaths.size() + ") matching " + path;

+// if (!ignore_errors)

+// throw new WebApplicationException(error, Response.Status.BAD_REQUEST);

+// }

+//

+// if (error != null && ignore_errors) {

+// LOG.info("Error detected {}. Ignoring it and returning input doc", error);

+//

+// }

+//

+// List +

## Documentation

-N/A

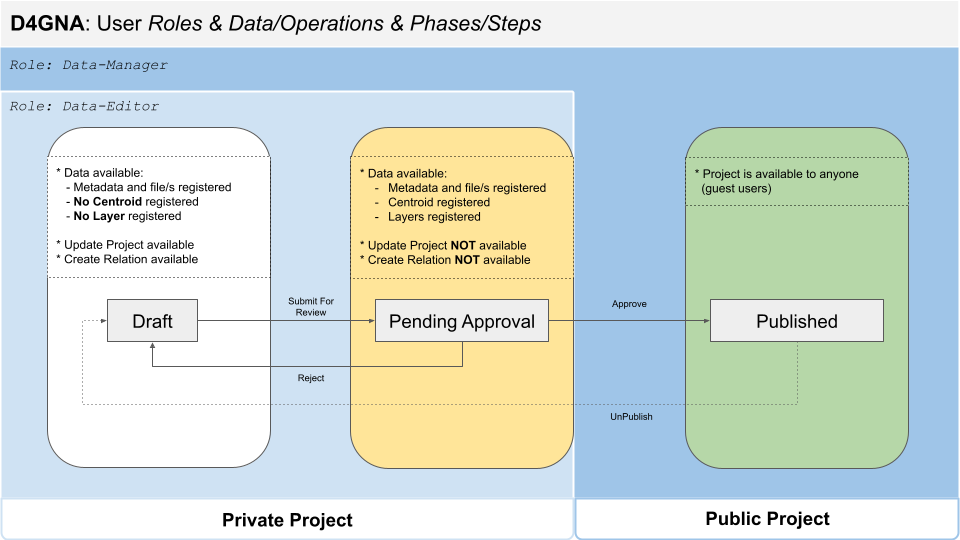

+D4GNA Use Case - 3 Phase Lifecycle

+

+

+

+Geoportal Service Documentation is available at [gCube CMS Suite](https://geoportal.d4science.org/geoportal-service/docs/index.html)

## Change log

diff --git a/src/main/java/org/gcube/portlets/user/geoportaldataentry/GeoPortalDataEntryApp.gwt.xml b/src/main/java/org/gcube/portlets/user/geoportaldataentry/GeoPortalDataEntryApp.gwt.xml

index 8e50a33..2611f74 100644

--- a/src/main/java/org/gcube/portlets/user/geoportaldataentry/GeoPortalDataEntryApp.gwt.xml

+++ b/src/main/java/org/gcube/portlets/user/geoportaldataentry/GeoPortalDataEntryApp.gwt.xml

@@ -13,9 +13,17 @@
