Initial commit


commit

4aec1a7efd

|

|

@ -0,0 +1,85 @@

|

|||

# Container names

|

||||

NGINX_CONTAINER_NAME=nginx

|

||||

REDIS_CONTAINER_NAME=redis

|

||||

POSTGRESQL_CONTAINER_NAME=db

|

||||

SOLR_CONTAINER_NAME=solr

|

||||

DATAPUSHER_CONTAINER_NAME=datapusher

|

||||

CKAN_CONTAINER_NAME=ckan

|

||||

WORKER_CONTAINER_NAME=ckan-worker

|

||||

|

||||

# Host Ports

|

||||

CKAN_PORT_HOST=5000

|

||||

NGINX_PORT_HOST=81

|

||||

NGINX_SSLPORT_HOST=8443

|

||||

|

||||

# CKAN databases

|

||||

POSTGRES_USER=postgres

|

||||

POSTGRES_PASSWORD=postgres

|

||||

POSTGRES_DB=postgres

|

||||

POSTGRES_HOST=db

|

||||

CKAN_DB_USER=ckandbuser

|

||||

CKAN_DB_PASSWORD=ckandbpassword

|

||||

CKAN_DB=ckandb

|

||||

DATASTORE_READONLY_USER=datastore_ro

|

||||

DATASTORE_READONLY_PASSWORD=datastore

|

||||

DATASTORE_DB=datastore

|

||||

CKAN_SQLALCHEMY_URL=postgresql://ckandbuser:ckandbpassword@db/ckandb

|

||||

CKAN_DATASTORE_WRITE_URL=postgresql://ckandbuser:ckandbpassword@db/datastore

|

||||

CKAN_DATASTORE_READ_URL=postgresql://datastore_ro:datastore@db/datastore

|

||||

|

||||

# Test database connections

|

||||

TEST_CKAN_SQLALCHEMY_URL=postgresql://ckan:ckan@db/ckan_test

|

||||

TEST_CKAN_DATASTORE_WRITE_URL=postgresql://ckan:ckan@db/datastore_test

|

||||

TEST_CKAN_DATASTORE_READ_URL=postgresql://datastore_ro:datastore@db/datastore_test

|

||||

|

||||

# Dev settings

|

||||

USE_HTTPS_FOR_DEV=false

|

||||

|

||||

# CKAN core

|

||||

CKAN_VERSION=2.10.0

|

||||

CKAN_SITE_ID=default

|

||||

CKAN_SITE_URL=https://localhost:8443

|

||||

CKAN_PORT=5000

|

||||

CKAN_PORT_HOST=5000

|

||||

CKAN___BEAKER__SESSION__SECRET=CHANGE_ME

|

||||

# See https://docs.ckan.org/en/latest/maintaining/configuration.html#api-token-settings

|

||||

CKAN___API_TOKEN__JWT__ENCODE__SECRET=string:CHANGE_ME

|

||||

CKAN___API_TOKEN__JWT__DECODE__SECRET=string:CHANGE_ME

|

||||

CKAN_SYSADMIN_NAME=ckan_admin

|

||||

CKAN_SYSADMIN_PASSWORD=test1234

|

||||

CKAN_SYSADMIN_EMAIL=your_email@example.com

|

||||

CKAN_STORAGE_PATH=/var/lib/ckan

|

||||

CKAN_SMTP_SERVER=smtp.corporateict.domain:25

|

||||

CKAN_SMTP_STARTTLS=True

|

||||

CKAN_SMTP_USER=user

|

||||

CKAN_SMTP_PASSWORD=pass

|

||||

CKAN_SMTP_MAIL_FROM=ckan@localhost

|

||||

TZ=UTC

|

||||

|

||||

# Solr

|

||||

SOLR_IMAGE_VERSION=2.10-solr9

|

||||

CKAN_SOLR_URL=http://solr:8983/solr/ckan

|

||||

TEST_CKAN_SOLR_URL=http://solr:8983/solr/ckan

|

||||

|

||||

# Redis

|

||||

REDIS_VERSION=6

|

||||

CKAN_REDIS_URL=redis://redis:6379/1

|

||||

TEST_CKAN_REDIS_URL=redis://redis:6379/1

|

||||

|

||||

# Datapusher

|

||||

DATAPUSHER_VERSION=0.0.20

|

||||

CKAN_DATAPUSHER_URL=http://datapusher:8800

|

||||

CKAN__DATAPUSHER__CALLBACK_URL_BASE=http://ckan:5000

|

||||

DATAPUSHER_REWRITE_RESOURCES=True

|

||||

DATAPUSHER_REWRITE_URL=http://ckan:5000

|

||||

|

||||

# NGINX

|

||||

NGINX_PORT=80

|

||||

NGINX_SSLPORT=443

|

||||

|

||||

# Extensions

|

||||

CKAN__PLUGINS="envvars image_view text_view recline_view datastore datapusher"

|

||||

CKAN__HARVEST__MQ__TYPE=redis

|

||||

CKAN__HARVEST__MQ__HOSTNAME=redis

|

||||

CKAN__HARVEST__MQ__PORT=6379

|

||||

CKAN__HARVEST__MQ__REDIS_DB=1

|

||||

|

|

@ -0,0 +1,48 @@

|

|||

name: Build CKAN Docker

on:
  # Trigger the workflow on push or pull request,
  # but only for the master branch
  push:
    branches:
      - master
  pull_request:
    branches:
      - master

jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v2

      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v1

      - name: Set up QEMU
        uses: docker/setup-qemu-action@v1

      - name: NGINX build
        uses: docker/build-push-action@v2
        with:
          context: ./nginx
          file: ./nginx/Dockerfile
          push: false
          tags: kowhai/ckan-docker-nginx:test-build-only

      - name: PostgreSQL build
        uses: docker/build-push-action@v2
        with:
          context: ./postgresql
          file: ./postgresql/Dockerfile
          push: false
          tags: kowhai/ckan-docker-postgresql:test-build-only

      - name: CKAN build
        uses: docker/build-push-action@v2
        with:
          context: ./ckan
          file: ./ckan/Dockerfile
          push: false
          tags: kowhai/ckan-docker-ckan:test-build-only

|

||||

|

||||

|

|

@ -0,0 +1,10 @@

|

|||

# generic

|

||||

.DS_Store

|

||||

.vagrant

|

||||

|

||||

# code & config

|

||||

_service-provider/*

|

||||

_solr/schema.xml

|

||||

_src/*

|

||||

local/*

|

||||

.env

|

||||

|

|

@ -0,0 +1,287 @@

|

|||

# Docker Compose setup for CKAN

|

||||

|

||||

|

||||

* [Overview](#overview)

|

||||

* [Installing Docker](#installing-docker)

|

||||

* [docker compose vs docker-compose](#docker-compose-vs-docker-compose)

|

||||

* [Install CKAN plus dependencies](#install-ckan-plus-dependencies)

|

||||

* [Development mode](#development-mode)

|

||||

* [Create an extension](#create-an-extension)

|

||||

* [Running HTTPS on development mode](#running-https-on-development-mode)

|

||||

* [CKAN images](#ckan-images)

|

||||

* [Extending the base images](#extending-the-base-images)

|

||||

* [Applying patches](#applying-patches)

|

||||

* [Debugging with pdb](#pdb)

|

||||

* [Datastore and Datapusher](#Datastore-and-datapusher)

|

||||

* [NGINX](#nginx)

|

||||

* [The ckanext-envvars extension](#envvars)

|

||||

* [The CKAN_SITE_URL parameter](#CKAN_SITE_URL)

|

||||

* [Changing the base image](#Changing-the-base-image)

|

||||

* [Replacing DataPusher with XLoader](#Replacing-DataPusher-with-XLoader)

|

||||

|

||||

|

||||

## 1. Overview

|

||||

|

||||

This is a set of configuration and setup files to run a CKAN site.

|

||||

|

||||

The CKAN images used are from the official CKAN [ckan-docker-base](https://github.com/ckan/ckan-docker-base) repo.

|

||||

|

||||

The non-CKAN images are as follows:

|

||||

|

||||

* DataPusher: CKAN's [pre-configured DataPusher image](https://github.com/ckan/ckan-base/tree/main/datapusher).

|

||||

* PostgreSQL: Official PostgreSQL image. Database files are stored in a named volume.

|

||||

* Solr: CKAN's [pre-configured Solr image](https://github.com/ckan/ckan-solr). Index data is stored in a named volume.

|

||||

* Redis: standard Redis image

|

||||

* NGINX: latest stable nginx image that includes SSL and Non-SSL endpoints

|

||||

|

||||

The site is configured using environment variables that you can set in the `.env` file.

|

||||

|

||||

## 2. Installing Docker

|

||||

|

||||

Install Docker by following these instructions: [Install Docker Engine on Ubuntu](https://docs.docker.com/engine/install/ubuntu/)

|

||||

|

||||

To verify a successful Docker installation, run `docker run hello-world` and `docker version`. The first should print a greeting from a test container, and the second should output versions for both client and server.

|

||||

|

||||

## 3. docker compose *vs* docker-compose

|

||||

|

||||

All Docker Compose commands in this README use the V2 version of Compose, i.e. `docker compose`. The older version (V1) used the `docker-compose` command. Please see [Docker Compose](https://docs.docker.com/compose/compose-v2/) for more information.

|

||||

|

||||

## 4. Install (build and run) CKAN plus dependencies

|

||||

|

||||

#### Base mode

|

||||

|

||||

Use this if you are a maintainer and will not be making code changes to CKAN or to CKAN extensions

|

||||

|

||||

Copy the included `.env.example` and rename it to `.env`. Modify it depending on your own needs.

|

||||

|

||||

Please note that when accessing CKAN directly via a browser (i.e. not going through NGINX), you will need to make sure "ckan" is set up as an alias to localhost in the local hosts file. Alternatively, change the `.env` entry for `CKAN_SITE_URL`.

|

||||

|

||||

Using the default values in the `.env.example` file will get you a working CKAN instance. A sysadmin user is created by default with the values defined in `CKAN_SYSADMIN_NAME` and `CKAN_SYSADMIN_PASSWORD` (`ckan_admin` and `test1234` by default). These should obviously be changed before running this setup as a public CKAN instance.
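As a rough aid for the warning above, the following sketch scans the text of a `.env` file and reports which sensitive settings still hold the example values. It is only an illustration: the helper names (`parse_env`, `unchanged_defaults`) and the choice of which keys to check are assumptions, not part of this repository.

```python
# Placeholder values as they appear in .env.example (assumed list; extend as needed).
DEFAULT_SECRETS = {
    "CKAN_SYSADMIN_PASSWORD": "test1234",
    "CKAN___BEAKER__SESSION__SECRET": "CHANGE_ME",
    "CKAN___API_TOKEN__JWT__ENCODE__SECRET": "string:CHANGE_ME",
    "CKAN___API_TOKEN__JWT__DECODE__SECRET": "string:CHANGE_ME",
}


def parse_env(text):
    """Parse KEY=VALUE lines, skipping blank lines and comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"')
    return values


def unchanged_defaults(env_text):
    """Return the sorted names of settings that still hold example values."""
    values = parse_env(env_text)
    return sorted(k for k, v in DEFAULT_SECRETS.items() if values.get(k) == v)
```

For example, `unchanged_defaults(open(".env").read())` returns an empty list once every checked value has been replaced.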

|

||||

|

||||

To build the images:

|

||||

|

||||

    docker compose build

|

||||

|

||||

To start the containers:

|

||||

|

||||

    docker compose up

|

||||

|

||||

This will start up the containers in the current window. By default the containers log directly to this window, with each container using a different colour. You can also use the `-d` "detached mode" option, i.e. `docker compose up -d`, if you wish to use the current window for something else.

|

||||

|

||||

At the end of the container start sequence there should be 6 containers running

|

||||

|

||||

|

||||

|

||||

After this step, CKAN should be running at `CKAN_SITE_URL`.

|

||||

|

||||

|

||||

#### Development mode

|

||||

|

||||

Use this mode if you are making code changes to CKAN and either creating new extensions or making code changes to existing extensions. This mode also uses the `.env` file for config options.

|

||||

|

||||

To develop local extensions use the `docker-compose.dev.yml` file:

|

||||

|

||||

To build the images:

|

||||

|

||||

    docker compose -f docker-compose.dev.yml build

|

||||

|

||||

To start the containers:

|

||||

|

||||

    docker compose -f docker-compose.dev.yml up

|

||||

|

||||

See [CKAN Images](#ckan-images) for more details of what happens when using development mode.

|

||||

|

||||

|

||||

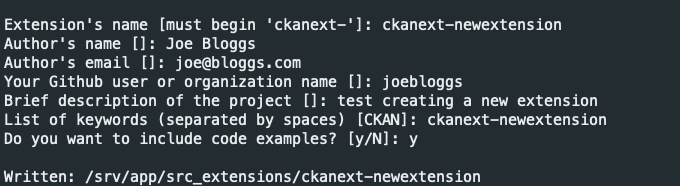

##### Create an extension

|

||||

|

||||

You can use the CKAN [extension](https://docs.ckan.org/en/latest/extensions/tutorial.html#creating-a-new-extension) instructions to create a CKAN extension. The only differences are that you execute the command inside the CKAN container and set the mounted `src/` folder as output:

|

||||

|

||||

    docker compose -f docker-compose.dev.yml exec ckan-dev /bin/sh -c "ckan generate extension --output-dir /srv/app/src_extensions"

|

||||

|

||||

|

||||

|

||||

|

||||

The new extension files and directories are created in the `/srv/app/src_extensions/` folder in the running container. They will also exist in the local `src/` directory, because the local `src/` directory is mounted as `/srv/app/src_extensions/` on the ckan container. You might need to change the owner of this folder to have the appropriate permissions.

|

||||

|

||||

##### Running HTTPS on development mode

|

||||

|

||||

Sometimes it is useful to run your local development instance under HTTPS, for instance if you are using authentication extensions like [ckanext-saml2auth](https://github.com/keitaroinc/ckanext-saml2auth). To enable it, set the following in your `.env` file:

|

||||

|

||||

USE_HTTPS_FOR_DEV=true

|

||||

|

||||

and update the site URL setting:

|

||||

|

||||

CKAN_SITE_URL=https://localhost:5000

|

||||

|

||||

After recreating the `ckan-dev` container, you should be able to access CKAN at https://localhost:5000

|

||||

|

||||

|

||||

## 5. CKAN images

|

||||

|

||||

|

||||

|

||||

|

||||

The Docker image config files used to build your CKAN project are located in the `ckan/` folder. There are two Docker files:

|

||||

|

||||

* `Dockerfile`: this is based on `ckan/ckan-base:<version>`, a base image located in the DockerHub repository, that has CKAN installed along with all its dependencies, properly configured and running on [uWSGI](https://uwsgi-docs.readthedocs.io/en/latest/) (production setup)

|

||||

* `Dockerfile.dev`: this is based on `ckan/ckan-base:<version>-dev`, also located in the DockerHub repository, and extends `ckan/ckan-base:<version>` to include:

|

||||

|

||||

* Any extension cloned on the `src` folder will be installed in the CKAN container when booting up Docker Compose (`docker compose up`). This includes installing any requirements listed in a `requirements.txt` (or `pip-requirements.txt`) file and running `python setup.py develop`.

|

||||

* CKAN is started running this: `/usr/bin/ckan -c /srv/app/ckan.ini run -H 0.0.0.0`.

|

||||

* Make sure to add the local plugins to the `CKAN__PLUGINS` env var in the `.env` file.

|

||||

|

||||

* Any custom changes to the scripts run during container start up can be made to scripts in the `setup/` directory. For instance, if you wanted to change the port on which CKAN runs you would need to make changes to the Docker Compose yaml file and the `start_ckan.sh.override` file. Then you would need to add the following line to the Dockerfile: `COPY setup/start_ckan.sh.override ${APP_DIR}/start_ckan.sh`. The `start_ckan.sh` file in the locally built image would override the `start_ckan.sh` file included in the base image.

|

||||

|

||||

## 6. Extending the base images

|

||||

|

||||

You can modify the Docker files to build your own customized image tailored to your project, installing any extensions and extra requirements needed. For example, this is where you would switch to a different CKAN base image, i.e. `ckan/ckan-base:<new version>`.

|

||||

|

||||

To perform extra initialization steps you can add scripts to your custom images and copy them to the `/docker-entrypoint.d` folder (The folder should be created for you when you build the image). Any `*.sh` and `*.py` file in that folder will be executed just after the main initialization script ([`prerun.py`](https://github.com/ckan/ckan-docker-base/blob/main/ckan-2.9/base/setup/prerun.py)) is executed and just before the web server and supervisor processes are started.

|

||||

|

||||

For instance, consider the following custom image:

|

||||

|

||||

```
ckan
├── docker-entrypoint.d
│   └── setup_validation.sh
├── Dockerfile
└── Dockerfile.dev

```

|

||||

|

||||

We want to install an extension like [ckanext-validation](https://github.com/frictionlessdata/ckanext-validation) that needs to create database tables at startup. We create a `setup_validation.sh` script in a `docker-entrypoint.d` folder with the necessary commands:

|

||||

|

||||

```bash
#!/bin/bash

# Create DB tables if not there
ckan -c /srv/app/ckan.ini validation init-db
```

|

||||

|

||||

And then in our `Dockerfile.dev` file we install the extension and copy the initialization scripts:

|

||||

|

||||

```Dockerfile
FROM ckan/ckan-base:2.9.7-dev

RUN pip install -e git+https://github.com/frictionlessdata/ckanext-validation.git#egg=ckanext-validation && \
    pip install -r https://raw.githubusercontent.com/frictionlessdata/ckanext-validation/master/requirements.txt

COPY docker-entrypoint.d/* /docker-entrypoint.d/
```

|

||||

|

||||

NB: There are a number of extension examples commented out in the Dockerfile.dev file
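
The `setup_validation.sh` example is a shell script, but `*.py` files in `/docker-entrypoint.d` are executed as well. The sketch below shows what such a Python script might look like, assuming the `ckan config-tool <ini> "key = value"` form that `prerun.py` itself uses. The script name, the `MY_SITE_TITLE` variable and the helper functions are hypothetical and only serve as illustration.

```python
#!/usr/bin/env python
# Hypothetical docker-entrypoint.d/set_site_title.py
import os
import shutil
import subprocess

CKAN_INI = os.environ.get("CKAN_INI", "/srv/app/ckan.ini")


def config_tool_command(option, value, ini_path=CKAN_INI):
    """Build the `ckan config-tool` call that sets one option in ckan.ini."""
    return ["ckan", "config-tool", ini_path, "{} = {}".format(option, value)]


def apply_option(option, value):
    """Run the command if the ckan CLI is available; otherwise just print it."""
    command = config_tool_command(option, value)
    if shutil.which("ckan") is None:
        # Outside the container there is no ckan CLI to call.
        print("ckan CLI not found, would run:", command)
        return False
    subprocess.check_call(command)
    return True


if __name__ == "__main__":
    # MY_SITE_TITLE is an assumed variable name, not one defined by this repo.
    apply_option("ckan.site_title", os.environ.get("MY_SITE_TITLE", "My CKAN site"))
```

For simple options the ckanext-envvars route (setting the value in `.env`) is usually less work; a script like this is mainly useful when a value has to be computed at start-up.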

|

||||

|

||||

## 7. Applying patches

|

||||

|

||||

When building your project-specific CKAN images (the ones defined in the `ckan/` folder), you can apply patches to CKAN core or any of the built extensions. To do so, create a folder inside `ckan/patches` with the name of the package to patch (i.e. `ckan` or `ckanext-??`). Inside you can place patch files that will be applied when building the images. The patches will be applied in alphabetical order, so you can prefix them sequentially if necessary.

|

||||

|

||||

For instance, check the following example image folder:

|

||||

|

||||

```
ckan
├── patches
│   ├── ckan
│   │   ├── 01_datasets_per_page.patch
│   │   ├── 02_groups_per_page.patch
│   │   ├── 03_or_filters.patch
│   └── ckanext-harvest
│       └── 01_resubmit_objects.patch
├── setup
├── Dockerfile
└── Dockerfile.dev

```
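
To preview the order in which patch files in a layout like this would be picked up, a small sketch follows. It assumes plain alphabetical ordering of file names, as described above; the Dockerfile itself uses a shell loop, so treat this as an approximation rather than the build's exact logic. The function name `patch_plan` is made up for this example.

```python
from pathlib import Path


def patch_plan(patches_root):
    """List (package, patch file name) pairs in the order they would apply."""
    plan = []
    for package_dir in sorted(Path(patches_root).iterdir()):
        if not package_dir.is_dir():
            continue
        # Alphabetical order, so numeric prefixes like 01_, 02_ control sequence.
        for patch_file in sorted(package_dir.glob("*.patch")):
            plan.append((package_dir.name, patch_file.name))
    return plan
```

Calling `patch_plan("ckan/patches")` on the tree above would list the three `ckan` patches first, followed by the `ckanext-harvest` one.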

|

||||

|

||||

## 8. pdb

|

||||

|

||||

Add these lines to the `ckan-dev` service in the docker-compose.dev.yml file

|

||||

|

||||

|

||||

|

||||

Debug with pdb (example) - Interact with `docker attach $(docker container ls -qf name=ckan)`

|

||||

|

||||

command: `python -m pdb /usr/lib/ckan/venv/bin/ckan --config /srv/app/ckan.ini run --host 0.0.0.0 --passthrough-errors`

|

||||

|

||||

## 9. Datastore and datapusher

|

||||

|

||||

The Datastore database and user are created as part of the entrypoint scripts for the db container. There is also a Datapusher container running the latest version of Datapusher.
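
The Datastore is reached through two connection URLs in `.env`, `CKAN_DATASTORE_WRITE_URL` and `CKAN_DATASTORE_READ_URL`. A minimal sketch of a sanity check on that pair is shown below, assuming the usual arrangement where both URLs target the same database and the read URL uses a separate read-only user. The helper names are illustrative only.

```python
from urllib.parse import urlsplit


def describe_db_url(url):
    """Split a PostgreSQL connection URL into user, host and database name."""
    parts = urlsplit(url)
    return {
        "user": parts.username,
        "host": parts.hostname,
        "database": parts.path.lstrip("/"),
    }


def check_datastore_urls(write_url, read_url):
    """Return a list of problems; an empty list means the pair looks consistent."""
    write, read = describe_db_url(write_url), describe_db_url(read_url)
    problems = []
    if (write["host"], write["database"]) != (read["host"], read["database"]):
        problems.append("read and write URLs point at different databases")
    if write["user"] == read["user"]:
        problems.append("read URL should use a separate read-only user")
    return problems
```

With the default values from `.env.example` (`ckandbuser` for writing, `datastore_ro` for reading, both on `db/datastore`) the check returns no problems.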

|

||||

|

||||

## 10. NGINX

|

||||

|

||||

The base Docker Compose configuration uses an NGINX image as the front-end (i.e. reverse proxy). It includes HTTPS running on port 8443. A self-signed SSL certificate is generated as part of the ENTRYPOINT. The NGINX `server_name` directive and the `CN` field in the SSL certificate have both been set to 'localhost'. This should obviously not be used for production.

|

||||

|

||||

Create the SSL cert and key files as follows:

`openssl req -new -newkey rsa:4096 -days 365 -nodes -x509 -subj "/C=DE/ST=Berlin/L=Berlin/O=None/CN=localhost" -keyout ckan-local.key -out ckan-local.crt`

The `ckan-local.*` files then need to be moved into the `nginx/setup/` directory.

|

||||

|

||||

## 11. envvars

|

||||

|

||||

The ckanext-envvars extension is used in the CKAN Docker base repo to build the base images.

|

||||

This extension checks for environment variables conforming to an expected format and updates the corresponding CKAN config settings with their values.

|

||||

|

||||

For the extension to correctly identify which env var keys map to the format used for the config object, env var keys should be formatted in the following way:

|

||||

|

||||

* All uppercase
* Replace periods ('.') with two underscores ('__')
* Keys must begin with 'CKAN' or 'CKANEXT'; if they do not, you can prepend them with '`CKAN___`'

|

||||

|

||||

For example:

|

||||

|

||||

* `CKAN__PLUGINS="envvars image_view text_view recline_view datastore datapusher"`

|

||||

* `CKAN__DATAPUSHER__CALLBACK_URL_BASE=http://ckan:5000`

|

||||

* `CKAN___BEAKER__SESSION__SECRET=CHANGE_ME`

|

||||

|

||||

These parameters can be added to the `.env` file

|

||||

|

||||

For more information please see [ckanext-envvars](https://github.com/okfn/ckanext-envvars)
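
The naming rules above can be expressed as a small helper. This is a sketch based only on the three rules listed in this section, not code taken from ckanext-envvars, and the function name is invented for the example.

```python
def config_key_to_env_var(key):
    """Turn a CKAN config option name into the env var name described above."""
    # Rules 1 and 2: all uppercase, periods become two underscores.
    name = key.upper().replace(".", "__")
    # Rule 3: keys not starting with CKAN or CKANEXT get the CKAN___ prefix.
    if not name.startswith(("CKAN", "CKANEXT")):
        name = "CKAN___" + name
    return name


if __name__ == "__main__":
    for option in ("ckan.plugins", "ckan.datapusher.callback_url_base", "beaker.session.secret"):
        print(option, "->", config_key_to_env_var(option))
```

The three options in the demo map to the same variable names used in the examples above, including the triple underscore in `CKAN___BEAKER__SESSION__SECRET`.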

|

||||

|

||||

## 12. CKAN_SITE_URL

|

||||

|

||||

For convenience the `CKAN_SITE_URL` parameter should be set in the `.env` file. For development it can be set to http://localhost:5000, and for non-development to https://localhost:8443.

|

||||

|

||||

## 13. Manage new users

|

||||

|

||||

1. Create a new user from the Docker host, for example to create a new user called 'admin'

|

||||

|

||||

`docker exec -it <container-id> ckan -c ckan.ini user add admin email=admin@localhost`

|

||||

|

||||

To delete the 'admin' user

|

||||

|

||||

`docker exec -it <container-id> ckan -c ckan.ini user remove admin`

|

||||

|

||||

2. Create a new user from within the ckan container. You will need to get a session on the running container

|

||||

|

||||

`ckan -c ckan.ini user add admin email=admin@localhost`

|

||||

|

||||

To delete the 'admin' user

|

||||

|

||||

`ckan -c ckan.ini user remove admin`

|

||||

|

||||

## 14. Changing the base image

|

||||

|

||||

The base image used in the CKAN Dockerfile and Dockerfile.dev can be changed so that a different DockerHub image is used, e.g. `ckan/ckan-base:2.9.9` could be used instead of `ckan/ckan-base:2.10.1`.

|

||||

|

||||

## 15. Replacing DataPusher with XLoader

|

||||

|

||||

Check out the wiki page for this: https://github.com/ckan/ckan-docker/wiki/Replacing-DataPusher-with-XLoader

|

||||

|

||||

Copying and License
-------------------

|

||||

|

||||

This material is copyright (c) 2006-2023 Open Knowledge Foundation and contributors.

|

||||

|

||||

It is open and licensed under the GNU Affero General Public License (AGPL) v3.0

|

||||

whose full text may be found at:

|

||||

|

||||

http://www.fsf.org/licensing/licenses/agpl-3.0.html

|

||||

|

|

@ -0,0 +1,19 @@

|

|||

FROM ckan/ckan-base:2.10.4

|

||||

|

||||

# Install any extensions needed by your CKAN instance

|

||||

# See Dockerfile.dev for more details and examples

|

||||

|

||||

# Copy custom initialization scripts

|

||||

COPY docker-entrypoint.d/* /docker-entrypoint.d/

|

||||

|

||||

# Apply any patches needed to CKAN core or any of the built extensions (not the

|

||||

# runtime mounted ones)

|

||||

COPY patches ${APP_DIR}/patches

|

||||

|

||||

RUN for d in $APP_DIR/patches/*; do \
        if [ -d $d ]; then \
            for f in `ls $d/*.patch | sort -g`; do \
                cd $SRC_DIR/`basename "$d"` && echo "$0: Applying patch $f to $SRC_DIR/`basename $d`"; patch -p1 < "$f" ; \
            done ; \
        fi ; \
    done

|

||||

|

|

@ -0,0 +1,97 @@

|

|||

FROM ckan/ckan-dev:2.10.4

|

||||

|

||||

RUN pip install --upgrade pip

|

||||

ENV PROJ_DIR=/usr

|

||||

RUN apk add proj proj-dev

|

||||

RUN apk add proj-util

|

||||

|

||||

|

||||

# Install any extensions needed by your CKAN instance

|

||||

# - Make sure to add the plugins to CKAN__PLUGINS in the .env file

|

||||

# - Also make sure to provide all extra configuration options, either by:
#   * Adding them to the .env file (check the ckanext-envvars syntax for env vars), or
#   * Adding extra configuration scripts to the /docker-entrypoint.d folder to update
#      the CKAN config file (ckan.ini) with the `ckan config-tool` command

|

||||

#

|

||||

# See README > Extending the base images for more details

|

||||

#

|

||||

# For instance:

|

||||

#

|

||||

### XLoader ###

|

||||

#RUN pip3 install -e 'git+https://github.com/ckan/ckanext-xloader.git@master#egg=ckanext-xloader' && \

|

||||

# pip3 install -r ${APP_DIR}/src/ckanext-xloader/requirements.txt && \

|

||||

# pip3 install -U requests[security]

|

||||

|

||||

### Harvester ###

|

||||

#RUN pip3 install -e 'git+https://github.com/ckan/ckanext-harvest.git@master#egg=ckanext-harvest' && \

|

||||

# pip3 install -r ${APP_DIR}/src/ckanext-harvest/pip-requirements.txt

|

||||

# will also require gather_consumer and fetch_consumer processes running (please see https://github.com/ckan/ckanext-harvest)

|

||||

RUN pip install -e git+https://github.com/ckan/ckanext-harvest.git@v1.5.6#egg=ckanext-harvest && \
    pip install -r https://raw.githubusercontent.com/ckan/ckanext-harvest/v1.5.6/requirements.txt

|

||||

|

||||

### Scheming ###

|

||||

#RUN pip3 install -e 'git+https://github.com/ckan/ckanext-scheming.git@master#egg=ckanext-scheming'

|

||||

|

||||

### Pages ###

|

||||

#RUN pip3 install -e git+https://github.com/ckan/ckanext-pages.git#egg=ckanext-pages

|

||||

|

||||

### DCAT ###

|

||||

RUN pip install -e git+https://github.com/ckan/ckanext-dcat.git@v1.6.0#egg=ckanext-dcat && \

|

||||

pip install -r https://raw.githubusercontent.com/ckan/ckanext-dcat/v1.6.0/requirements.txt

|

||||

|

||||

|

||||

### SPATIAL ###

|

||||

RUN pip install pyproj==3.6.1

|

||||

RUN pip install geos

|

||||

RUN apk add py3-pip

|

||||

RUN pip install pymap3d

|

||||

RUN apk --update add build-base libxslt-dev

|

||||

|

||||

RUN apk add --virtual .build-deps \
        --repository http://dl-cdn.alpinelinux.org/alpine/edge/testing \
        --repository http://dl-cdn.alpinelinux.org/alpine/edge/main \
        gcc libc-dev geos-dev geos && \
    runDeps="$(scanelf --needed --nobanner --recursive /usr/local \
    | awk '{ gsub(/,/, "\nso:", $2); print "so:" $2 }' \
    | xargs -r apk info --installed \
    | sort -u)" && \
    apk add --virtual .rundeps $runDeps

|

||||

|

||||

RUN geos-config --cflags

|

||||

|

||||

#RUN pip install --disable-pip-version-check shapely

|

||||

|

||||

#RUN apk del build-base python3-dev && \

|

||||

# rm -rf /var/cache/apk/*

|

||||

RUN apk add --no-cache python3-dev libstdc++ && \
    apk add --no-cache g++ && \
    ln -s /usr/include/locale.h /usr/include/xlocale.h && \
    pip3 install numpy && \
    pip3 install pandas

|

||||

|

||||

RUN pip install shapely

|

||||

|

||||

RUN pip install -e git+https://github.com/ckan/ckanext-spatial.git@v2.1.1#egg=ckanext-spatial

|

||||

RUN pip install -r https://raw.githubusercontent.com/ckan/ckanext-spatial/v2.1.1/requirements.txt

|

||||

|

||||

RUN pip install WebHelpers2 && \
    pip install xmltodict && \
    pip install pyqrcode

|

||||

|

||||

# Clone the extension(s) you are writing for your own project in the `src` folder
# to get them mounted in this image at runtime

|

||||

|

||||

# Copy custom initialization scripts

|

||||

COPY docker-entrypoint.d/* /docker-entrypoint.d/

|

||||

|

||||

# Apply any patches needed to CKAN core or any of the built extensions (not the

|

||||

# runtime mounted ones)

|

||||

COPY patches ${APP_DIR}/patches

|

||||

|

||||

RUN for d in $APP_DIR/patches/*; do \
        if [ -d $d ]; then \
            for f in `ls $d/*.patch | sort -g`; do \
                cd $SRC_DIR/`basename "$d"` && echo "$0: Applying patch $f to $SRC_DIR/`basename $d`"; patch -p1 < "$f" ; \
            done ; \
        fi ; \
    done

|

||||

|

|

@ -0,0 +1,12 @@

|

|||

#!/bin/bash

if [[ $CKAN__PLUGINS == *"datapusher"* ]]; then
   # Datapusher settings have been configured in the .env file
   # Set API token if necessary
   if [ -z "$CKAN__DATAPUSHER__API_TOKEN" ] ; then
      echo "Set up ckan.datapusher.api_token in the CKAN config file"
      ckan config-tool $CKAN_INI "ckan.datapusher.api_token=$(ckan -c $CKAN_INI user token add ckan_admin datapusher | tail -n 1 | tr -d '\t')"
   fi
else
   echo "Not configuring DataPusher"
fi

|

||||

|

|

@ -0,0 +1,4 @@

|

|||

Use scripts in this folder to run extra initialization steps in your custom CKAN images.

|

||||

Any file with `.sh` or `.py` extension will be executed just after the main initialization

|

||||

script (`prerun.py`) is executed and just before the web server and supervisor processes are

|

||||

started.

|

||||

|

|

@ -0,0 +1 @@

|

|||

test

|

||||

|

|

@ -0,0 +1,212 @@

|

|||

import os
import sys
import subprocess
import psycopg2

try:
    from urllib.request import urlopen
    from urllib.error import URLError
except ImportError:
    from urllib2 import urlopen
    from urllib2 import URLError

import time
import re
import json

ckan_ini = os.environ.get("CKAN_INI", "/srv/app/ckan.ini")

RETRY = 5

|

||||

|

||||

|

||||

def update_plugins():

    plugins = os.environ.get("CKAN__PLUGINS", "")
    print(("[prerun] Setting the following plugins in {}:".format(ckan_ini)))
    print(plugins)
    cmd = ["ckan", "config-tool", ckan_ini, "ckan.plugins = {}".format(plugins)]
    subprocess.check_output(cmd, stderr=subprocess.STDOUT)
    print("[prerun] Plugins set.")

|

||||

|

||||

|

||||

def check_main_db_connection(retry=None):

    conn_str = os.environ.get("CKAN_SQLALCHEMY_URL")
    if not conn_str:
        print("[prerun] CKAN_SQLALCHEMY_URL not defined, not checking db")
        # Nothing to connect to, so skip the check as the message says
        return
    return check_db_connection(conn_str, retry)


def check_datastore_db_connection(retry=None):

    conn_str = os.environ.get("CKAN_DATASTORE_WRITE_URL")
    if not conn_str:
        print("[prerun] CKAN_DATASTORE_WRITE_URL not defined, not checking db")
        # Nothing to connect to, so skip the check as the message says
        return
    return check_db_connection(conn_str, retry)

|

||||

|

||||

|

||||

def check_db_connection(conn_str, retry=None):

    if retry is None:
        retry = RETRY
    elif retry == 0:
        print("[prerun] Giving up after 5 tries...")
        sys.exit(1)

    try:
        connection = psycopg2.connect(conn_str)

    except psycopg2.Error as e:
        print(str(e))
        print("[prerun] Unable to connect to the database, waiting...")
        time.sleep(10)
        check_db_connection(conn_str, retry=retry - 1)
    else:
        connection.close()

|

||||

|

||||

|

||||

def check_solr_connection(retry=None):

    if retry is None:
        retry = RETRY
    elif retry == 0:
        print("[prerun] Giving up after 5 tries...")
        sys.exit(1)

    url = os.environ.get("CKAN_SOLR_URL", "")
    search_url = '{url}/schema/name?wt=json'.format(url=url)

    try:
        connection = urlopen(search_url)
    except URLError as e:
        print(str(e))
        print("[prerun] Unable to connect to solr, waiting...")
        time.sleep(10)
        check_solr_connection(retry=retry - 1)
    else:
        conn_info = connection.read()
        schema_name = json.loads(conn_info)
        if 'ckan' in schema_name['name']:
            print('[prerun] Successfully connected to solr and CKAN schema loaded')
        else:
            print('[prerun] Successfully connected to solr, but CKAN schema not found')

|

||||

|

||||

|

||||

def init_db():

    db_command = ["ckan", "-c", ckan_ini, "db", "init"]
    print("[prerun] Initializing or upgrading db - start")
    try:
        subprocess.check_output(db_command, stderr=subprocess.STDOUT)
        print("[prerun] Initializing or upgrading db - end")
    except subprocess.CalledProcessError as e:
        # e.output is bytes, so compare against a bytes literal
        if b"OperationalError" in e.output:
            print(e.output)
            print("[prerun] Database not ready, waiting a bit before exit...")
            time.sleep(5)
            sys.exit(1)
        else:
            print(e.output)
            raise e

|

||||

|

||||

|

||||

def init_datastore_db():

    conn_str = os.environ.get("CKAN_DATASTORE_WRITE_URL")
    if not conn_str:
        print("[prerun] Skipping datastore initialization")
        return

    datastore_perms_command = ["ckan", "-c", ckan_ini, "datastore", "set-permissions"]

    connection = psycopg2.connect(conn_str)
    cursor = connection.cursor()

    print("[prerun] Initializing datastore db - start")
    try:
        datastore_perms = subprocess.Popen(
            datastore_perms_command, stdout=subprocess.PIPE
        )

        perms_sql = datastore_perms.stdout.read()
        # Remove internal pg command as psycopg2 does not like it
        perms_sql = re.sub(b'\\\\connect "(.*)"', b"", perms_sql)
        cursor.execute(perms_sql)
        for notice in connection.notices:
            print(notice)

        connection.commit()

        print("[prerun] Initializing datastore db - end")
        print(datastore_perms.stdout.read())
    except psycopg2.Error as e:
        print("[prerun] Could not initialize datastore")
        print(str(e))

    except subprocess.CalledProcessError as e:
        # e.output is bytes, so compare against a bytes literal
        if b"OperationalError" in e.output:
            print(e.output)
            print("[prerun] Database not ready, waiting a bit before exit...")
            time.sleep(5)
            sys.exit(1)
        else:
            print(e.output)
            raise e
    finally:
        cursor.close()
        connection.close()

|

||||

|

||||

|

||||

def create_sysadmin():

    name = os.environ.get("CKAN_SYSADMIN_NAME")
    password = os.environ.get("CKAN_SYSADMIN_PASSWORD")
    email = os.environ.get("CKAN_SYSADMIN_EMAIL")

    if name and password and email:

        # Check if user exists
        command = ["ckan", "-c", ckan_ini, "user", "show", name]

        out = subprocess.check_output(command)
        # Raw bytes pattern avoids the invalid "\s" escape in a plain literal
        if b"User:None" not in re.sub(rb"\s", b"", out):
            print("[prerun] Sysadmin user exists, skipping creation")
            return

        # Create user
        command = [
            "ckan",
            "-c",
            ckan_ini,
            "user",
            "add",
            name,
            "password=" + password,
            "email=" + email,
        ]

        subprocess.call(command)
        print("[prerun] Created user {0}".format(name))

        # Make it sysadmin
        command = ["ckan", "-c", ckan_ini, "sysadmin", "add", name]

        subprocess.call(command)
        print("[prerun] Made user {0} a sysadmin".format(name))


if __name__ == "__main__":

    maintenance = os.environ.get("MAINTENANCE_MODE", "").lower() == "true"

    if maintenance:
        print("[prerun] Maintenance mode, skipping setup...")
    else:
        check_main_db_connection()
        init_db()
        update_plugins()
        check_datastore_db_connection()
        init_datastore_db()
        check_solr_connection()
        create_sysadmin()
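The error handling in `init_db` inspects `e.output` from `subprocess.check_output`. On Python 3 that value is `bytes` unless text mode is requested, so the substring check needs a bytes needle. A minimal sketch of that classification follows; the helper names `is_db_not_ready` and `run_and_classify` and the stand-in failing command are assumptions for illustration and are not part of prerun.py.

```python
import subprocess
import sys


def is_db_not_ready(output: bytes) -> bool:
    """Return True if captured command output mentions an OperationalError."""
    # check_output captures bytes by default, so the needle must be bytes too.
    return b"OperationalError" in output


def run_and_classify(command):
    """Run a command; return 'ok', 'retry' (database not ready) or 'fail'."""
    try:
        subprocess.check_output(command, stderr=subprocess.STDOUT)
        return "ok"
    except subprocess.CalledProcessError as e:
        return "retry" if is_db_not_ready(e.output) else "fail"


if __name__ == "__main__":
    # Stand-in for `ckan db init`: prints an error and exits non-zero.
    fake = [
        sys.executable,
        "-c",
        "import sys; print('sqlalchemy.exc.OperationalError: refused'); sys.exit(1)",
    ]
    print(run_and_classify(fake))
```

Returning a label instead of calling `sys.exit` directly keeps the check easy to exercise without a database; the real script sleeps and exits in the "retry" case.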

@ -0,0 +1,57 @@

#!/bin/sh

# Add ckan.datapusher.api_token to the CKAN config file (updated with corrected value later)
ckan config-tool $CKAN_INI ckan.datapusher.api_token=xxx

# Set up the Secret key used by Beaker and Flask
# This can be overridden using a CKAN___BEAKER__SESSION__SECRET env var
if grep -E "beaker.session.secret ?= ?$" ckan.ini
then
    echo "Setting beaker.session.secret in ini file"
    ckan config-tool $CKAN_INI "beaker.session.secret=$(python3 -c 'import secrets; print(secrets.token_urlsafe())')"
    ckan config-tool $CKAN_INI "WTF_CSRF_SECRET_KEY=$(python3 -c 'import secrets; print(secrets.token_urlsafe())')"
    JWT_SECRET=$(python3 -c 'import secrets; print("string:" + secrets.token_urlsafe())')
    ckan config-tool $CKAN_INI "api_token.jwt.encode.secret=${JWT_SECRET}"
    ckan config-tool $CKAN_INI "api_token.jwt.decode.secret=${JWT_SECRET}"
fi

# Run the prerun script to init CKAN and create the default admin user
sudo -u ckan -EH python3 prerun.py
# Keep the prerun exit status; it decides below whether CKAN is started
PRERUN_STATUS=$?

echo "Set up ckan.datapusher.api_token in the CKAN config file"
ckan config-tool $CKAN_INI "ckan.datapusher.api_token=$(ckan -c $CKAN_INI user token add ckan_admin datapusher | tail -n 1 | tr -d '\t')"

# Run any startup scripts provided by images extending this one
if [ -d "/docker-entrypoint.d" ]
then
    for f in /docker-entrypoint.d/*; do
        case "$f" in
            *.sh) echo "$0: Running init file $f"; . "$f" ;;
            *.py) echo "$0: Running init file $f"; python3 "$f"; echo ;;
            *) echo "$0: Ignoring $f (not an sh or py file)" ;;
        esac
        echo
    done
fi

# Set the common uwsgi options
UWSGI_OPTS="--plugins http,python \
    --socket /tmp/uwsgi.sock \
    --wsgi-file /srv/app/wsgi.py \
    --module wsgi:application \
    --uid 92 --gid 92 \
    --http 0.0.0.0:5000 \
    --master --enable-threads \
    --lazy-apps \
    -p 2 -L -b 32768 --vacuum \
    --harakiri $UWSGI_HARAKIRI"

if [ "$PRERUN_STATUS" -eq 0 ]
then
    # Start supervisord
    supervisord --configuration /etc/supervisord.conf &
    # Start uwsgi
    sudo -u ckan -EH uwsgi $UWSGI_OPTS
else
    echo "[prerun] failed...not starting CKAN."
fi
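The start script generates its secrets with one-line `python3 -c` calls to `secrets.token_urlsafe()`. Spelled out as a standalone script, the same idea might look like the sketch below. The function name `generate_ckan_secrets` and the dict layout are assumptions for illustration; the config keys and the `string:` prefix are taken from the script itself.

```python
import secrets


def generate_ckan_secrets():
    """Build the config values the start script writes with `ckan config-tool`."""
    # The script reuses one generated value for both JWT settings.
    jwt_secret = "string:" + secrets.token_urlsafe()
    return {
        "beaker.session.secret": secrets.token_urlsafe(),
        "WTF_CSRF_SECRET_KEY": secrets.token_urlsafe(),
        "api_token.jwt.encode.secret": jwt_secret,
        "api_token.jwt.decode.secret": jwt_secret,
    }


if __name__ == "__main__":
    for key, value in generate_ckan_secrets().items():
        print(f"{key}={value}")
```

Each run produces fresh values, which is why the script only does this when `beaker.session.secret` is still empty in the ini file.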

@ -0,0 +1,99 @@

#!/bin/sh

# Install any local extensions in the src_extensions volume
echo "Looking for local extensions to install..."
echo "Extension dir contents:"
ls -la $SRC_EXTENSIONS_DIR
for i in $SRC_EXTENSIONS_DIR/*
do
    if [ -d $i ];
    then

        if [ -f $i/pip-requirements.txt ];
        then
            pip install -r $i/pip-requirements.txt
            echo "Found requirements file in $i"
        fi
        if [ -f $i/requirements.txt ];
        then
            pip install -r $i/requirements.txt
            echo "Found requirements file in $i"
        fi
        if [ -f $i/dev-requirements.txt ];
        then
            pip install -r $i/dev-requirements.txt
            echo "Found dev-requirements file in $i"
        fi
        if [ -f $i/setup.py ];
        then
            cd $i
            python3 $i/setup.py develop
            echo "Found setup.py file in $i"
            cd $APP_DIR
        fi

        # Point `use` in test.ini to location of `test-core.ini`
        if [ -f $i/test.ini ];
        then
            echo "Updating \`test.ini\` reference to \`test-core.ini\` for plugin $i"
            ckan config-tool $i/test.ini "use = config:../../src/ckan/test-core.ini"
        fi
    fi
done

# Set debug to true
echo "Enabling debug mode"
ckan config-tool $CKAN_INI -s DEFAULT "debug = true"

# Add ckan.datapusher.api_token to the CKAN config file (updated with corrected value later)
ckan config-tool $CKAN_INI ckan.datapusher.api_token=xxx

# Set up the Secret key used by Beaker and Flask
# This can be overridden using a CKAN___BEAKER__SESSION__SECRET env var
if grep -E "beaker.session.secret ?= ?$" ckan.ini
then
    echo "Setting beaker.session.secret in ini file"
    ckan config-tool $CKAN_INI "beaker.session.secret=$(python3 -c 'import secrets; print(secrets.token_urlsafe())')"
    ckan config-tool $CKAN_INI "WTF_CSRF_SECRET_KEY=$(python3 -c 'import secrets; print(secrets.token_urlsafe())')"
    JWT_SECRET=$(python3 -c 'import secrets; print("string:" + secrets.token_urlsafe())')
    ckan config-tool $CKAN_INI "api_token.jwt.encode.secret=${JWT_SECRET}"
    ckan config-tool $CKAN_INI "api_token.jwt.decode.secret=${JWT_SECRET}"
fi

# Update the plugins setting in the ini file with the values defined in the env var
echo "Loading the following plugins: $CKAN__PLUGINS"
ckan config-tool $CKAN_INI "ckan.plugins = $CKAN__PLUGINS"

# Update test-core.ini DB, SOLR & Redis settings
echo "Loading test settings into test-core.ini"
ckan config-tool $SRC_DIR/ckan/test-core.ini \
    "sqlalchemy.url = $TEST_CKAN_SQLALCHEMY_URL" \
    "ckan.datastore.write_url = $TEST_CKAN_DATASTORE_WRITE_URL" \
    "ckan.datastore.read_url = $TEST_CKAN_DATASTORE_READ_URL" \
    "solr_url = $TEST_CKAN_SOLR_URL" \
    "ckan.redis.url = $TEST_CKAN_REDIS_URL"

# Run the prerun script to init CKAN and create the default admin user
sudo -u ckan -EH python3 prerun.py

echo "Set up ckan.datapusher.api_token in the CKAN config file"
ckan config-tool $CKAN_INI "ckan.datapusher.api_token=$(ckan -c $CKAN_INI user token add ckan_admin datapusher | tail -n 1 | tr -d '\t')"

# Run any startup scripts provided by images extending this one
if [ -d "/docker-entrypoint.d" ]
then
    for f in /docker-entrypoint.d/*; do
        case "$f" in
            *.sh) echo "$0: Running init file $f"; . "$f" ;;
            *.py) echo "$0: Running init file $f"; python3 "$f"; echo ;;
            *) echo "$0: Ignoring $f (not an sh or py file)" ;;
        esac
        echo
    done
fi

# Start supervisord
supervisord --configuration /etc/supervisord.conf &

# Start the development server with automatic reload
sudo -u ckan -EH ckan -c $CKAN_INI run -H 0.0.0.0
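The development script walks `$SRC_EXTENSIONS_DIR` and installs whatever requirement files and `setup.py` each extension directory provides. A dry-run sketch of that discovery step is shown below. The function `plan_extension_installs` is hypothetical: it only reports which files the loop would act on and runs no `pip` or `setup.py` commands.

```python
import sys
from pathlib import Path

# File names the development start script looks for, in the order it checks them.
REQUIREMENT_FILES = ("pip-requirements.txt", "requirements.txt", "dev-requirements.txt")


def plan_extension_installs(src_extensions_dir):
    """Map each extension directory name to the install inputs found in it."""
    plan = {}
    for entry in sorted(Path(src_extensions_dir).iterdir()):
        if not entry.is_dir():
            continue  # the script only processes directories
        found = [name for name in REQUIREMENT_FILES if (entry / name).is_file()]
        if (entry / "setup.py").is_file():
            found.append("setup.py")
        plan[entry.name] = found
    return plan


if __name__ == "__main__":
    root = sys.argv[1] if len(sys.argv) > 1 else "."
    for ext, files in plan_extension_installs(root).items():
        print(ext, "->", ", ".join(files) or "nothing to install")
```

Running it against the mounted `./src` directory before starting the container gives a quick view of which extensions will trigger installs.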

@ -0,0 +1,71 @@

version: "3"

volumes:
  ckan_storage:
  pg_data:
  solr_data:

services:

  ckan-dev:
    build:
      context: ckan/
      dockerfile: Dockerfile.dev
      args:
        - TZ=${TZ}
    env_file:
      - .env
    depends_on:
      db:
        condition: service_healthy
      solr:
        condition: service_healthy
      redis:
        condition: service_healthy
    ports:
      - "0.0.0.0:${CKAN_PORT_HOST}:${CKAN_PORT}"
    volumes:
      - ckan_storage:/var/lib/ckan
      - ./src:/srv/app/src_extensions
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "wget", "-qO", "/dev/null", "http://localhost:5000"]

  datapusher:
    image: ckan/ckan-base-datapusher:${DATAPUSHER_VERSION}
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "wget", "-qO", "/dev/null", "http://localhost:8800"]

  db:
    build:
      context: postgresql/
    environment:
      - POSTGRES_USER
      - POSTGRES_PASSWORD
      - POSTGRES_DB
      - CKAN_DB_USER
      - CKAN_DB_PASSWORD
      - CKAN_DB
      - DATASTORE_READONLY_USER
      - DATASTORE_READONLY_PASSWORD
      - DATASTORE_DB
    volumes:
      - pg_data:/var/lib/postgresql/data
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "pg_isready", "-U", "${POSTGRES_USER}", "-d", "${POSTGRES_DB}"]

  solr:
    image: ckan/ckan-solr:${SOLR_IMAGE_VERSION}
    volumes:
      - solr_data:/var/solr
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "wget", "-qO", "/dev/null", "http://localhost:8983/solr/"]

  redis:
    image: redis:${REDIS_VERSION}
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "redis-cli", "-e", "QUIT"]

@ -0,0 +1,112 @@

version: "3"


volumes:
  ckan_storage:
  pg_data:
  solr_data:

services:

  nginx:
    container_name: ${NGINX_CONTAINER_NAME}
    build:
      context: nginx/
      dockerfile: Dockerfile
    networks:
      - webnet
      - ckannet
    depends_on:
      ckan:
        condition: service_healthy
    ports:
      - "0.0.0.0:${NGINX_SSLPORT_HOST}:${NGINX_SSLPORT}"

  ckan:
    container_name: ${CKAN_CONTAINER_NAME}
    build:
      context: ckan/
      dockerfile: Dockerfile
      args:
        - TZ=${TZ}
    networks:
      - ckannet
      - dbnet
      - solrnet
      - redisnet
    env_file:
      - .env
    depends_on:
      db:
        condition: service_healthy
      solr:
        condition: service_healthy
      redis:
        condition: service_healthy
    volumes:
      - ckan_storage:/var/lib/ckan
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "wget", "-qO", "/dev/null", "http://localhost:5000"]

  datapusher:
    container_name: ${DATAPUSHER_CONTAINER_NAME}
    networks:
      - ckannet
      - dbnet
    image: ckan/ckan-base-datapusher:${DATAPUSHER_VERSION}
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "wget", "-qO", "/dev/null", "http://localhost:8800"]

  db:
    container_name: ${POSTGRESQL_CONTAINER_NAME}
    build:
      context: postgresql/
    networks:
      - dbnet
    environment:
      - POSTGRES_USER
      - POSTGRES_PASSWORD
      - POSTGRES_DB
      - CKAN_DB_USER
      - CKAN_DB_PASSWORD
      - CKAN_DB
      - DATASTORE_READONLY_USER
      - DATASTORE_READONLY_PASSWORD
      - DATASTORE_DB
    volumes:
      - pg_data:/var/lib/postgresql/data
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "pg_isready", "-U", "${POSTGRES_USER}", "-d", "${POSTGRES_DB}"]

  solr:
    container_name: ${SOLR_CONTAINER_NAME}
    networks:
      - solrnet
    image: ckan/ckan-solr:${SOLR_IMAGE_VERSION}
    volumes:
      - solr_data:/var/solr
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "wget", "-qO", "/dev/null", "http://localhost:8983/solr/"]

  redis:
    container_name: ${REDIS_CONTAINER_NAME}
    image: redis:${REDIS_VERSION}
    networks:
      - redisnet
    restart: unless-stopped
    healthcheck:
      test: ["CMD", "redis-cli", "-e", "QUIT"]

networks:
  webnet:
  ckannet:
  solrnet:
    internal: true
  dbnet:
    internal: true
  redisnet:
    internal: true
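The production compose file isolates services by attaching each one only to the networks it needs. A small sketch of the resulting reachability is given below. The mapping is copied from the `networks:` lists in the file; the helper `can_reach` is hypothetical and models nothing beyond shared network membership.

```python
# Service -> networks, as listed in the production compose file.
SERVICE_NETWORKS = {
    "nginx": {"webnet", "ckannet"},
    "ckan": {"ckannet", "dbnet", "solrnet", "redisnet"},
    "datapusher": {"ckannet", "dbnet"},
    "db": {"dbnet"},
    "solr": {"solrnet"},
    "redis": {"redisnet"},
}


def can_reach(a, b):
    """Two services can talk directly only if they share at least one network."""
    return bool(SERVICE_NETWORKS[a] & SERVICE_NETWORKS[b])


if __name__ == "__main__":
    for target in ("ckan", "datapusher", "db", "solr", "redis"):
        print(f"nginx -> {target}: {can_reach('nginx', target)}")
```

Under this model `nginx` shares a network only with `ckan` and `datapusher`, so the database, Solr, and Redis are reachable from the proxy only through CKAN.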

@ -0,0 +1,23 @@

FROM nginx:stable-alpine

ENV NGINX_DIR=/etc/nginx

RUN apk update --no-cache && \
    apk upgrade --no-cache && \
    apk add --no-cache openssl

COPY setup/nginx.conf ${NGINX_DIR}/nginx.conf
COPY setup/index.html /usr/share/nginx/html/index.html
COPY setup/default.conf ${NGINX_DIR}/conf.d/

RUN mkdir -p ${NGINX_DIR}/certs

ENTRYPOINT \
  openssl req \
    -subj '/C=DE/ST=Berlin/L=Berlin/O=None/CN=localhost' \
    -x509 -newkey rsa:4096 \
    -nodes \
    -keyout ${NGINX_DIR}/certs/ckan-local.key \
    -out ${NGINX_DIR}/certs/ckan-local.crt \
    -days 365 && \
  nginx -g 'daemon off;'

@ -0,0 +1,30 @@

|

|||

-----BEGIN CERTIFICATE-----

|

||||

MIIFIDCCAwgCCQDr3dGZoSvqMDANBgkqhkiG9w0BAQsFADBSMQswCQYDVQQGEwJE

|

||||

RTEPMA0GA1UECAwGQmVybGluMQ8wDQYDVQQHDAZCZXJsaW4xDTALBgNVBAoMBE5v

|

||||

bmUxEjAQBgNVBAMMCWxvY2FsaG9zdDAeFw0yMzAzMzEwMjM1MjZaFw0yNDAzMzAw

|

||||

MjM1MjZaMFIxCzAJBgNVBAYTAkRFMQ8wDQYDVQQIDAZCZXJsaW4xDzANBgNVBAcM

|

||||

BkJlcmxpbjENMAsGA1UECgwETm9uZTESMBAGA1UEAwwJbG9jYWxob3N0MIICIjAN

|

||||

BgkqhkiG9w0BAQEFAAOCAg8AMIICCgKCAgEApej5FEF4lCOAmmUaAr67w6Go2XZO

|

||||

crV2UoWbJQq+aC688XpSX5MaxBVK7r4MQGvwC0u/5aN50fyGQBWGeiY6/27MbGHA

|

||||

fimLtGAmHf5ys4FYtD71YYV0ekUMvlTV1flV3gdM3JItlkXR8ukqIb6WlAGv4vS3

|

||||

31QdoUyd7bGbCMmtDJ2ecnSlO5U0l9Udoqz4+cDPUMWMc1rXw9DfK/mzm+KR3iW+

|

||||

QdWwbWj+Crd/aBKiofKIscq2svRfcVisxSbPr4ib1iMEAxes3nt2cBYNQe8H2OVh

|

||||

SHbskKtaVgG5d+X+f/Mo+P6/1wrqY1JBgkegWkpcaz5mlT4tjsiudfmKsRRnKqHP

|

||||

m5qohWBZxH2MDWX1ggJsziI546a5Y0lkvazql8QUd44X/vrWnx37sCn50Dj8DRAf

|

||||

xtzNAC4doO+nIS+NC964yr6Ps4NrZE++WP5Ry6VUKhl46JSRkg6vtc27ZRrGn6LS

|

||||

AmWU/Ob6/9UaPQkWZ3A/iDnkrkBflM6wdaD/EQmb5LLou84dhZCivqEJ6/5TdB3c

|

||||

8w5muTQY2SLY9JmvECNQpfviD1IdXq7zqeH23L/hgE9i3GrYGWqGc9E3cjNht8Qt

|

||||

hF7WbRaomzbjH6ChZTtiSEw3wtf6bTLKuJjWCBDbp+GypyfDWrvJI2UFVwtH5QMv

|

||||

HYvxG4t+u+E6F2MCAwEAATANBgkqhkiG9w0BAQsFAAOCAgEAQcFH6iKXcErUuPJv

|

||||

N+EdDfJh8CTvbqnp7SXOBQ2Q66NhsTZXPrpyOzT3APoeineWhwt7YZF59m02O1Ek

|

||||

yS2qVEdAI9vDgmRvR6ryRsaEkLvnbhfTsBkN56c2oLTxqHBHooAyBKVIl0rSplW9

|

||||

EYhZ9t+08QNd/2unEipgTMFUM+JIMMzseKDwrug97tGsCftIPeWddkPchT309Lwr

|

||||

ECl2JsXX4t67oNR54hqRRvxyriSx/E8BF7rupsnaGNdNPPASoBbGVGEWumUutWMh

|

||||

PzlkyOgpl5fZ44WqYOBvBeLGGPTkM3uoaySv6GNOAxFsXJfHXCq59TL93LRn4RKs

|

||||

rik07ZabYa95JFAPUUSzMpplU4RCpE6r+MFceb1WMrDpK/4zoLLeIwmlwiHsrg/6

|

||||

L0tN1/RLVQwvpxiVqlmETlyDmqFC+McURNU1ZJU2V8efQTWhDVnB7rNVefQsbTLN

|

||||

5jaWiKMKQxva2Skf1jgVT9JOfYYCRlHpryOp9yIrqQqVxlaaDJz/Jm91Pt4QhCDj

|

||||

VhSMDYVMFtE/ylMbB1qR3MJBw0xCCG4zdZpUzvFfR6/wQ5FZ4DvXsbYnsxX/JhgV

|

||||

sTqxkdnhhR0UsxuHyxGWVPPxS+5IZdxVETcjrMVeaK9PAHyBBSI4DQHYIJWoyZ3m

|

||||

y9oQx2IvfVXpGptadU5EKWM6210=

|

||||

-----END CERTIFICATE-----

|

||||

|

|

@ -0,0 +1,52 @@

|

|||

-----BEGIN PRIVATE KEY-----

|

||||

MIIJQwIBADANBgkqhkiG9w0BAQEFAASCCS0wggkpAgEAAoICAQCl6PkUQXiUI4Ca

|

||||

ZRoCvrvDoajZdk5ytXZShZslCr5oLrzxelJfkxrEFUruvgxAa/ALS7/lo3nR/IZA

|

||||

FYZ6Jjr/bsxsYcB+KYu0YCYd/nKzgVi0PvVhhXR6RQy+VNXV+VXeB0zcki2WRdHy

|

||||

6SohvpaUAa/i9LffVB2hTJ3tsZsIya0MnZ5ydKU7lTSX1R2irPj5wM9QxYxzWtfD

|

||||

0N8r+bOb4pHeJb5B1bBtaP4Kt39oEqKh8oixyray9F9xWKzFJs+viJvWIwQDF6ze

|

||||

e3ZwFg1B7wfY5WFIduyQq1pWAbl35f5/8yj4/r/XCupjUkGCR6BaSlxrPmaVPi2O

|

||||

yK51+YqxFGcqoc+bmqiFYFnEfYwNZfWCAmzOIjnjprljSWS9rOqXxBR3jhf++taf

|

||||

HfuwKfnQOPwNEB/G3M0ALh2g76chL40L3rjKvo+zg2tkT75Y/lHLpVQqGXjolJGS

|

||||

Dq+1zbtlGsafotICZZT85vr/1Ro9CRZncD+IOeSuQF+UzrB1oP8RCZvksui7zh2F

|

||||

kKK+oQnr/lN0HdzzDma5NBjZItj0ma8QI1Cl++IPUh1ervOp4fbcv+GAT2LcatgZ

|

||||

aoZz0TdyM2G3xC2EXtZtFqibNuMfoKFlO2JITDfC1/ptMsq4mNYIENun4bKnJ8Na

|

||||

u8kjZQVXC0flAy8di/Ebi3674ToXYwIDAQABAoICAFZTZma3ujm6T0wGlwYeoCwm

|

||||

jWi5OhBNgwdlJVicwn4K85zh/MJmFGM6gQbANDfA8eGuxGaELPqp3mCx0or0IXaO

|

||||

/CbYpgP/MgXkkXDB2IS2JKWErMDVY8nK69qM4ca4OYmRWtjZ5oZuRdOSpq1wMYFJ

|

||||

b28zzgiSB+jJqNLourZT2Yra6Hq9Xswl0nu+E/F09wdc34Izh+Ttu57Tq4uCHYZa

|

||||

2XMxSFGREn+bRbPlzpEkQSLqw11fELkEljSv4xWiICZBenRtO8UwKG6K5xFjJ/rK

|

||||

mNauY3QFDQopXpOpygsszMNejk8gnkkSEOsk/ZkAE9tnHbdffJjjBWlp2fzgnty1

|

||||

72MwzlrinfOsYn6Ioe/mobGmedxQl4hiu6lI/Rl94C8HmycYRddQAg/55mTgZHBK

|

||||

kUQCnqbLl9JLFiSwbL6YupkKGJeSPoXdzJcp4v+PT8a1QRDzdge8bwXQZJ59UhEu

|

||||

EYJnYy79jBT8+UdTZxCYdmc3wuvUvOpxLihOEdr5+/6ZqQ0znoeTXNHxvzb43vRy

|

||||

W7XgaP4pQH6/sD8mSRGHWNdG0hX9Fjc/C9rQzwMJwCAcGRTN7CjyOCMMbkmoCy5v

|

||||

UDV22WzZqlUUZijuinLVV2Gn1WSXLWPSOJUFpiVgvrnycItroRoOfOAQOt20HnQu

|

||||

b/R2P0xCKJCmHqBICpRhAoIBAQDZYD1ZMpvIX2ZyGkjUpy2Zm4ofly0mf/uzMnhv

|

||||

y76aedZ+uSxlDLnlHTbL2sVOQhq6aRDYAWKYxekDf5FxZ8SXx11Dn/QHvGcZ/Hcl

|

||||

LrhsGvVKPNPOMsXT8o0g7/BaS3hrzzOgHPRPl6dUZ78C4jX/KLjmNoqeO+FYqqB9

|

||||

15yiGIDwJAanhoBOD7gonM9D9f3O6H5KzaDiggem+xSF3RVJyxPTWUEEa9hGZVcx

|

||||

/QZuEe8W79x3Tk0L5I9/Lw6qfN/JFuN9BY4zTLGar6TtDN2LRIfHm+8eHVyFASxO

|

||||

RqvTe9MfJwDRuwrULhaCtLjlLpU2HMjhZDlfG3z6EebulZJ1AoIBAQDDY7WEiksY

|

||||

/5eCyWp1oWTxqnmNKhxD6vd1nRviDEpVTZ0lsHdtiKzpkiEXbvkKjbFbm7wEcsNx

|

||||

HLKL4Q7Az89iR++dN4QkaqzsdnyRVc9W6UlUk5T2FqKoqsYcm0tG7fgIlXZ0PHgs

|

||||

jG9cHXIne/QvFQ15xfAv/6bAi0rjfntGf47TaZT6Y56sQQ14OvYemjYviWiBaylQ

|

||||

kbj3k+mAEGI8n71A2fWndk133HiLJ1EyWLUc8DEcB4kqwJHIYJGEYkwRWwoJmrS2

|

||||

hRZVHsn9ar4qi/0UOodUtLpZXerfNPW/KmFCOcI1I7244UgWpOcRke19F2OOI+Eh

|

||||

mOi4aJwVEJd3AoIBACKf4MW/eO7uuzu7khRFWM8Z5mNnyipSwn3lsSdllcO3WoIu

|

||||

7rJd15J2F89a1ojDoMxGhgdSGSlqhNYo0Lr2o2rlt6ZY6R7+VJHgE/5ZNckKdj3P

|

||||

+JDkp3w+K1quvWM0mEbb50Y+tm+jIWUhbVyBOcad7u3EjEnuEdP0wcGpwWpUat1V

|

||||

b7Xph7BncpcNezpBCZ+Wit9RZ6oMujlPzxIPiB+L+Gl20xNoNjfoVn5A5nBL7QCD

|

||||

TmO2ljEpw+2nSje/0kmOmsfERcVIFxYjmiqkHPnc/Z++59StKpqI+EyzlxUFqThS

|

||||

FyBRIcVwXeeN79GZnOzUou677yOGFl8i0Nz5+C0CggEBAKTodd5snh92MVk4R/sK

|

||||

AdmaCUckoICOQtdoh40M1HwUqqqRuuqerVnhdL6Dcfv/RQ7NbS3P8rZ4AxXeGIaR

|

||||

njYUAt+NaKEXy+Uzx8UeSIXRFYwll1bwGc8De3vPcgRmeq47/6LxGnh2+tIjJCLB

|

||||

EoHeYeZCMotAWWwu5EEHkmIY7OHwPcXq6JP3v7eXA/0mKM+MSMDaQh93Lkb+9teY

|

||||

fGEwbRncG+KADbg5QyAnSfeVOR84dipzDckgiKo3Hvo9wHfxf5JFmXpm70deWhrh

|

||||

yai9SBeXonrSomkkxEQpPbRfv4CWoRwak1kEAsTh3whMQsYORH9GNxAVL23dFMcO

|

||||

ntcCggEBANhRGQvq1vxhnLkilwJ0EBocQ+KF8nZfzzOtQ194gPolRWpjVzDOeGc0

|

||||

Us4omOreNpIXNYg9ELWMgm53viEoY3GMVt1kPPGwWW/JGeGv0kqr8aM3kWm5gc2H

|

||||

eu/nM5JbOvUKxnruia9I8BvJeeTTVytRQbv4kojEkZagHKYoZJ+ox0Cx1UmXeVI/

|

||||

vCufq4wqetJFNv05SNw7r+UObbc57BIPSvR3SGYmaZYkb8Wo/dZXF+vOAySnR8Go

|

||||

3bihBMtzmspt9JQtPBDy84okQrojsfm8fyyMRZ/UtMrhvGYFd9bcMDsCY+jybRXa

|

||||

E0CmRHNQumr+KBM8UT4YtWA+recgbtA=

|

||||

-----END PRIVATE KEY-----

|

||||

|

|

@ -0,0 +1,46 @@

server {
    #listen 80;
    #listen [::]:80;
    listen 443 ssl;
    listen [::]:443 ssl;
    server_name localhost;
    ssl_certificate /etc/nginx/certs/ckan-local.crt;
    ssl_certificate_key /etc/nginx/certs/ckan-local.key;

    # TLS 1.2 & 1.3 only
    ssl_protocols TLSv1.2 TLSv1.3;

    # Disable weak ciphers
    ssl_prefer_server_ciphers on;
    ssl_ciphers 'ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-CHACHA20-POLY1305:ECDHE-RSA-CHACHA20-POLY1305:ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-SHA384:ECDHE-RSA-AES256-SHA384:ECDHE-ECDSA-AES128-SHA256:ECDHE-RSA-AES128-SHA256';

    # SSL sessions
    ssl_session_timeout 1d;
    # ssl_session_cache defined in stream and http
    ssl_session_tickets off;

    #access_log /var/log/nginx/host.access.log main;

    location / {
        proxy_pass http://ckan:5000/;
        proxy_set_header X-Forwarded-For $remote_addr;
        proxy_set_header Host $host;
        #proxy_cache cache;
        proxy_cache_bypass $cookie_auth_tkt;
        proxy_no_cache $cookie_auth_tkt;
        proxy_cache_valid 30m;
        proxy_cache_key $host$scheme$proxy_host$request_uri;
    }

    error_page 400 401 402 403 404 405 406 407 408 409 410 411 412 413 414 415 416 417 418 421 422 423 424 425 426 428 429 431 451 500 501 502 503 504 505 506 507 508 510 511 /error.html;

    # redirect server error pages to the static page /error.html
    #
    location = /error.html {
        ssi on;
        internal;
        auth_basic off;
        root /usr/share/nginx/html;
    }

}

@ -0,0 +1,22 @@

<!DOCTYPE html>
<html lang="en">
<head>
    <meta charset="UTF-8">
    <meta name="viewport" content="width=device-width, initial-scale=1.0">
    <meta http-equiv="X-UA-Compatible" content="ie=edge">
    <title>CKAN Docker NGINX landing page</title>
    <style>
        h1{
            font-weight:lighter;
            font-family: Arial, Helvetica, sans-serif;
        }
    </style>
</head>
<body>

    <h2>
        CKAN Docker NGINX landing page
    </h2>

</body>
</html>

@ -0,0 +1,91 @@

user nginx;
worker_processes auto;

error_log /var/log/nginx/error.log notice;
pid /var/run/nginx.pid;


events {
    worker_connections 1024;
}


http {
    include /etc/nginx/mime.types;
    default_type application/octet-stream;

    log_format main '$remote_addr - $remote_user [$time_local] "$request" '
                    '$status $body_bytes_sent "$http_referer" '
                    '"$http_user_agent" "$http_x_forwarded_for"';

    access_log /var/log/nginx/access.log main;

    sendfile on;
    tcp_nopush on;
    tcp_nodelay on;
    types_hash_max_size 2048;
    keepalive_timeout 65;

    # Don't expose Nginx version
    server_tokens off;

    # Prevent clickjacking attacks
    add_header X-Frame-Options "SAMEORIGIN";

    # Mitigate cross-site scripting attacks
    add_header X-XSS-Protection "1; mode=block";

    # Enable gzip compression
    gzip on;

    proxy_cache_path /tmp/nginx_cache levels=1:2 keys_zone=cache:30m max_size=250m;
    proxy_temp_path /tmp/nginx_proxy 1 2;

    include /etc/nginx/conf.d/*.conf;

    # Error status text
    map $status $status_text {
        400 'Bad Request';
        401 'Unauthorized';
        402 'Payment Required';
        403 'Forbidden';
        404 'Not Found';
        405 'Method Not Allowed';
        406 'Not Acceptable';
        407 'Proxy Authentication Required';
        408 'Request Timeout';
        409 'Conflict';
        410 'Gone';
        411 'Length Required';
        412 'Precondition Failed';
        413 'Payload Too Large';
        414 'URI Too Long';
        415 'Unsupported Media Type';
        416 'Range Not Satisfiable';
        417 'Expectation Failed';
        418 'I\'m a teapot';
        421 'Misdirected Request';
        422 'Unprocessable Entity';
        423 'Locked';
        424 'Failed Dependency';
        425 'Too Early';
        426 'Upgrade Required';
        428 'Precondition Required';
        429 'Too Many Requests';